AI Ethics Research Library.

A curated library of links to research papers and reports relevant to AI ethics.

AI & Bias

-

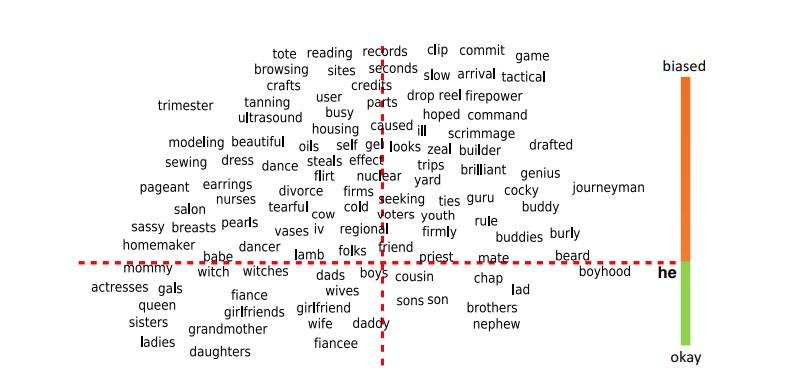

Gender Bias in Large Language Models across Multiple Languages

This paper examines gender bias in LLM-generated outputs across different languages using three measurements: (1) gender bias in selecting descriptive words given a gender-related context; (2) gender bias in selecting gender-related pronouns (she/he) given the descriptive words; and (3) gender bias in the topics of LLM-generated dialogues.

-

Bias in Generative AI

This study analyzed images generated by three popular generative artificial intelligence (AI) tools (Midjourney, Stable Diffusion, and DALL·E 2) depicting various occupations to investigate potential bias in AI generators. The analysis revealed two overarching areas of concern: (1) systematic gender and racial biases, and (2) subtle biases in facial expressions and appearances.

-

Online Misogyny Against Female Candidates in the 2022 Brazilian Elections: A Threat to Women's Political Representation?

Technology-facilitated gender-based violence has become a global threat to women's political representation and democracy. Understanding how online hate affects its targets is thus paramount. This study analyzed 10 million tweets directed at female candidates in the 2022 Brazilian elections and examined the candidates' reactions to online misogyny.

-

Angry Men, Sad Women: Large Language Models Reflect Gendered Stereotypes in Emotion Attribution

Large language models (LLMs) reflect societal norms and biases, especially about gender. While societal biases and stereotypes have been extensively researched in various NLP applications, there is a surprising gap for emotion analysis. Yet emotion and gender are closely linked in societal discourse: for example, women are often thought of as more empathetic, while men's anger is more socially accepted. The reproduction of emotion stereotypes in LLMs raises questions about their predictive use for emotion applications.

-

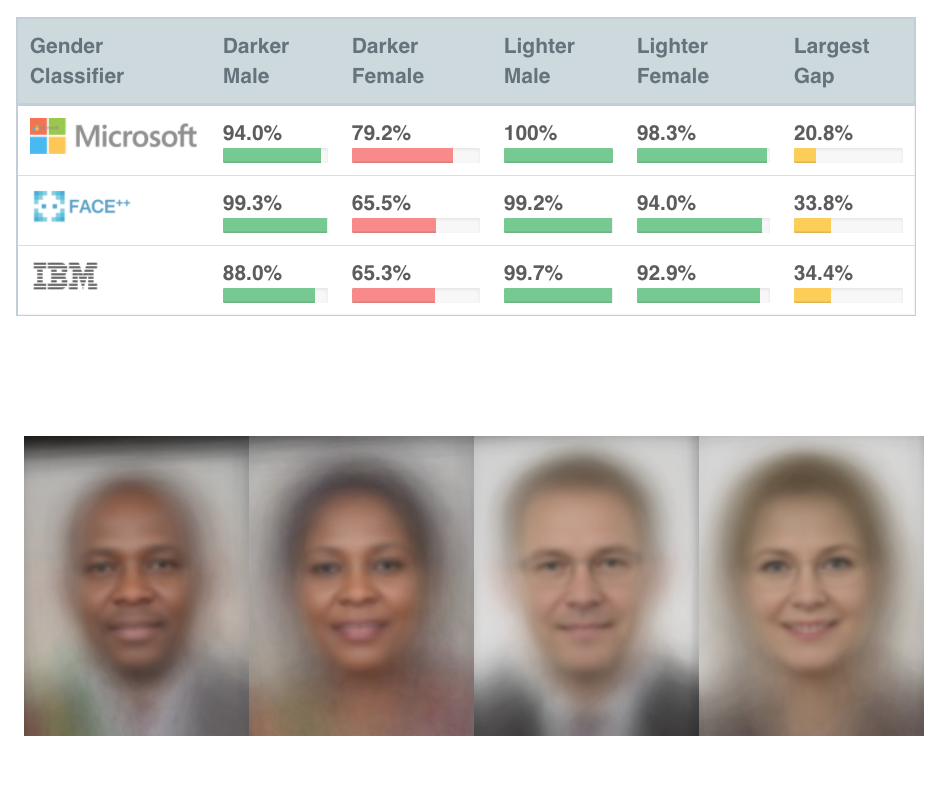

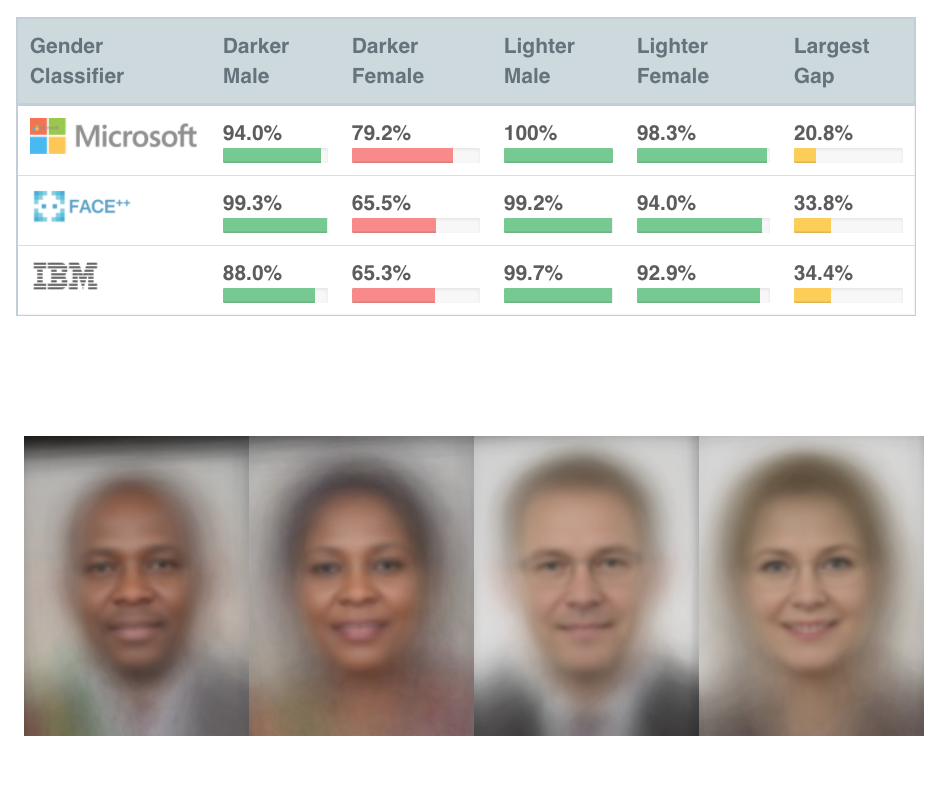

Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification

Recent studies demonstrate that machine learning algorithms can discriminate based on classes like race and gender. This study presents an approach to evaluating bias in automated facial analysis algorithms and datasets with respect to phenotypic subgroups. An evaluation of three commercial gender classification systems revealed that darker-skinned females are the most misclassified group (with error rates of up to 34.7%).

-

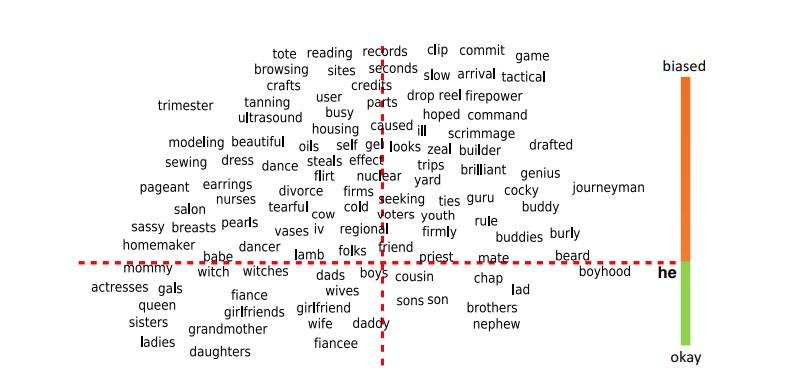

Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings

The blind application of machine learning runs the risk of amplifying biases present in data. This danger arises with word embeddings, a popular framework for representing text data as vectors that has been used in many machine learning and natural language processing tasks. This study shows that even word embeddings trained on Google News articles exhibit female/male gender stereotypes to a disturbing extent.

Silicon Valley, History, Culture, & Technofascism

-

Making Silicon Valley: Engineering Culture, Innovation, and Industrial Growth, 1930–1970

How did Silicon Valley emerge as a major industrial district? What role did the military and Stanford University play in its formation? How and why did electronics firms in the Valley supersede their eastern competitors in the component business?

-

Silicon Politics, from Puritan Soil to California Dreaming

The chummy relations between Silicon Valley and Washington, DC during most of the Obama era underscored how politically influential West Coast technology leaders had become, possessing power to shape federal policy and spending priorities that matched if not exceeded that once held by the midcentury high-tech Brahmins who advised presidents and secured multimillion-dollar federal contracts.

-

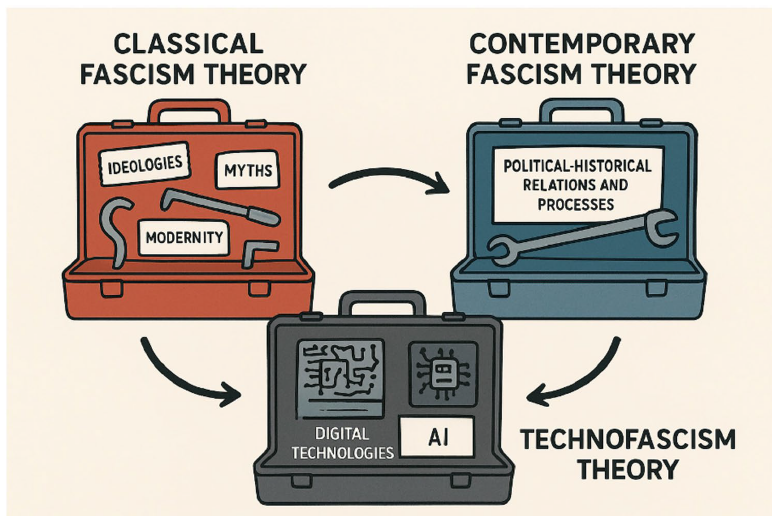

Technofascism: AI, Big Tech, and the new authoritarianism

Drawing on the classic philosophical and political theory literature on fascism, authoritarianism, and totalitarianism, this paper argues that features of contemporary digital technologies, their governance, and their political context mirror features of fascism, and shows how they do so. It concludes with a call to resist these new, anti-democratic forms of governance and domination, and to make more systemic changes to both the development of technologies and the governance of tech and society.

-

LLMs and Crisis Epistemology: The Business of Making Old Crises Seem New

The popular misconception that AI-related crises are unprecedented is an example of what Kyle Whyte calls ‘crisis epistemology,’ a pretext of newness used to dismiss the intergenerational wisdom of oppressed groups. If AI-related crises are new, then what can we learn about them from oppressed people’s histories? Nothing. The author argues that oppressed groups (rather than billionaire technocrats) should be at the forefront of AI discourse, research, and policymaking.

-

Rentier capitalism, technofascism and the destruction of the common

This paper critically examines the emerging discourse on technofeudalism. While mainstream narratives celebrate technological advancement driven by American tech giants, proponents of the technofeudalism thesis argue that a new ruling class – ‘cloudalists’ – has supplanted traditional capitalists, extracting data and rent in ways reminiscent of feudal relations.

AI Hype & Research Integrity

-

A Brief History of AI: How to Prevent Another Winter (A Critical Review)

AI’s path has never been smooth, having essentially fallen apart twice in its lifetime (‘winters’ of AI), both after periods of popular success (‘summers’ of AI). The authors provide a brief rundown of AI’s evolution over the course of decades, highlighting its crucial moments and major turning points from inception to the present. In doing so, they attempt to learn, anticipate the future, and discuss what steps may be taken to prevent another ‘winter.’

-

Misrepresented Technological Solutions in Imagined Futures: The Origins and Dangers of AI Hype in the Research Community

Centering the research community as a key player in the development and proliferation of hype, this study examines the origins and risks of AI hype to the research community and society more broadly, and proposes a set of measures that researchers, regulators, and the public can take to mitigate these risks and reduce the prevalence of unfounded claims about the technology.

-

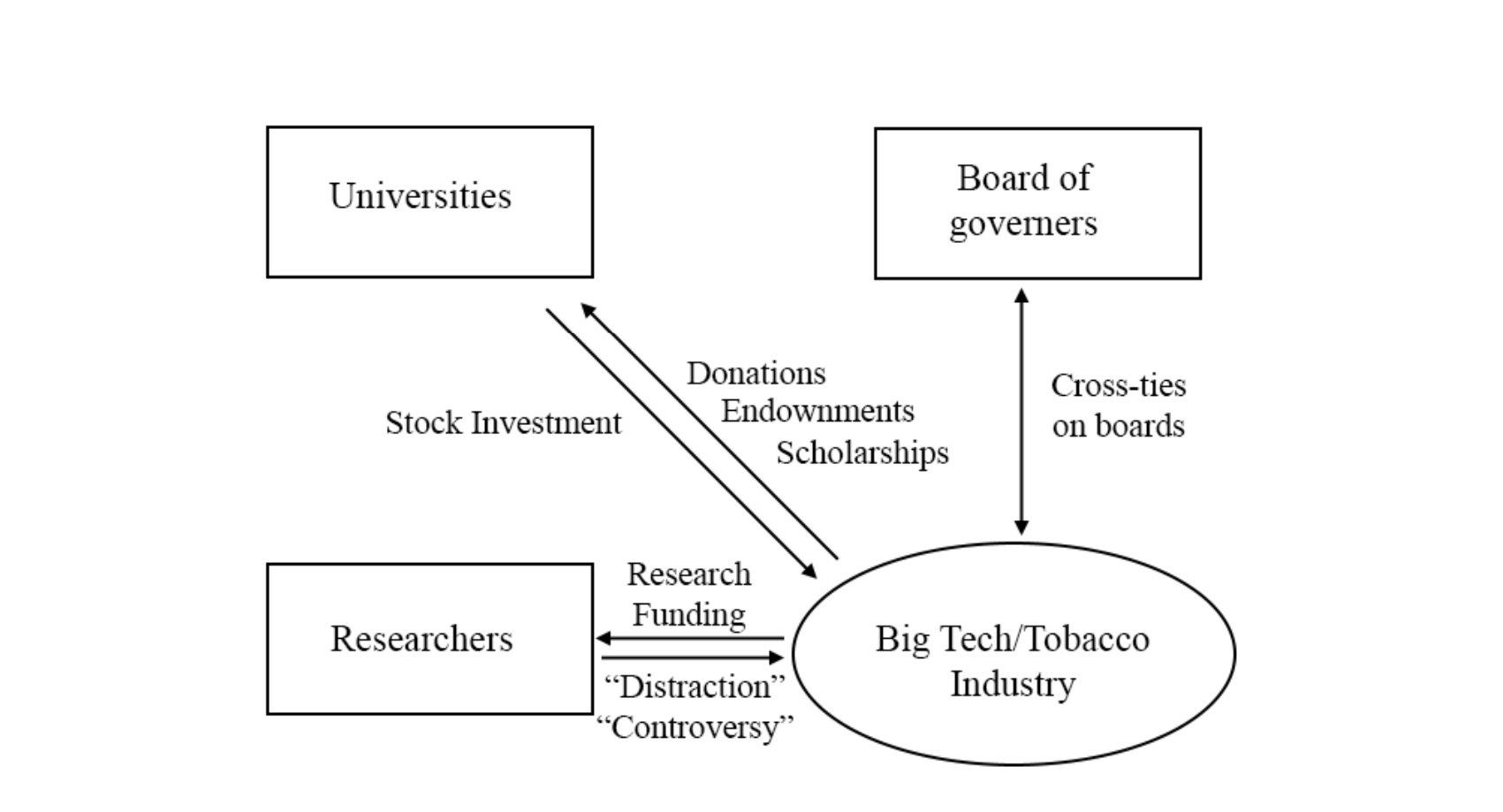

The Grey Hoodie Project: Big Tobacco, Big Tech, and the threat on academic integrity

As governmental bodies rely on academics’ expert advice to shape policy regarding Artificial Intelligence, it is important that these academics not have conflicts of interest that may cloud or bias their judgement. The authors explore how Big Tech can actively distort the academic landscape to suit its needs.
